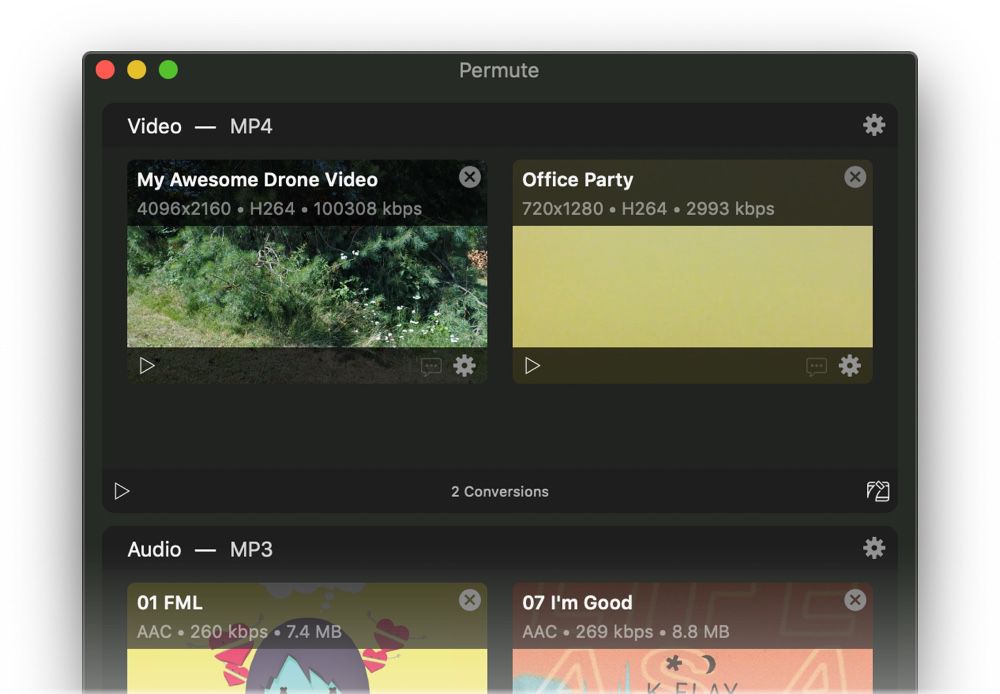

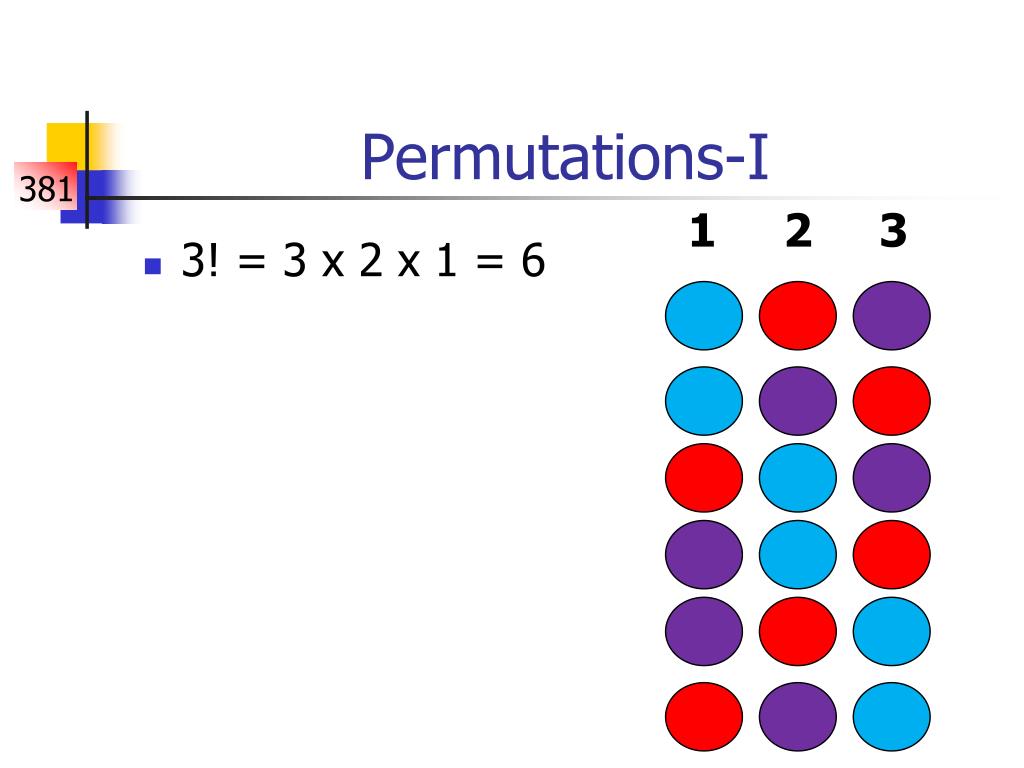

Introduction to Feature Importance

Advanced topics in machine learning are dominated by black box models. As the name suggests, black box models are complex models where it’s extremely hard to understand how model inputs are combined to make predictions. Deep learning models like artificial neural networks and ensemble models like random forests, gradient boosting learners, and model stacking are examples of black box models that yield remarkably accurate predictions in a variety of domains, from urban planning to computer vision. However, one drawback to using these black box models is that it’s often difficult to interpret how predictors influence the predictions, especially with conventional statistical methods.

This article will explain an alternative way to interpret black box models called permutation feature importance: a powerful tool that allows us to detect which features in our dataset have predictive power, regardless of what model we’re using. We will begin by discussing the differences between traditional statistical inference and feature importance to motivate the need for permutation feature importance. Then, we’ll explain permutation feature importance and implement it from scratch to discover which predictors are important for predicting house prices in Blotchville. We’ll conclude by discussing some drawbacks to this approach and introducing some packages that can help us compute permutation feature importance in future projects.

Training a model that accurately predicts outcomes is great, but most of the time you don’t just need predictions; you want to be able to interpret your model. For example, if you build a model of house prices, knowing which features are most predictive of price tells us which features people are willing to pay for. Feature importance is the most useful interpretation tool, and data scientists regularly examine model parameters (such as the coefficients of linear models) to identify important features. Feature importance is available for more than just linear models, though: most random forest (RF) implementations also provide measures of feature importance. In fact, the RF importance technique we’ll introduce here (permutation importance) is applicable to any model, though few machine learning practitioners seem to realize this.

Permutation importance is a common, reasonably efficient, and very reliable technique. It directly measures variable importance by observing the effect on model accuracy of randomly shuffling each predictor variable. Shuffling a column breaks its relationship with the target. A large drop in accuracy therefore means the model relies on that feature, while little or no drop means it doesn’t. This technique is broadly applicable because it doesn’t rely on internal model parameters, such as linear regression coefficients (which are really just poor proxies for feature importance).

We recommend using permutation importance for all models, including linear models, because it lets us largely avoid the pitfalls of interpreting model parameters. Interpreting regression coefficients requires great care and expertise. Landmines include failing to normalize input data, misinterpreting coefficients when using Lasso or Ridge regularization, and including highly correlated variables (such as `country` and `country_name`).

The default random forest feature importance strategies in scikit-learn and R are biased. Both measure the mean decrease in impurity, which tends to inflate the importance of continuous or high-cardinality features. To get reliable results in Python, use permutation importance, as implemented in the rfpimp package (installable via pip). For R, pass `importance=T` to the random forest constructor, then `type=1` to R’s `importance()` function. In addition, your feature importance measures will only be reliable if your model is trained with suitable hyper-parameters.
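Permutation importance shuffles one predictor at a time and measures how much the model's accuracy drops. That procedure is simple enough to sketch from scratch. The version below is a minimal, model-agnostic sketch in plain Python with no third-party libraries. It rests on a few assumptions of our own. The model can be any callable that maps feature rows to predictions, and accuracy is measured with mean squared error. The three-feature dataset, the helper names, and the linear model's coefficients are all hypothetical. They were chosen to illustrate the unnormalized-input landmine: feature `x1` gets a tiny coefficient only because its values span a much larger range.

```python
import random


def mse(y_true, y_pred):
    """Mean squared error between two equal-length sequences."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def permutation_importance(predict, X, y, n_repeats=5, seed=0):
    """Minimal model-agnostic permutation importance (hypothetical helper).

    predict: any callable mapping a list of feature rows to a list of predictions.
    Returns, for each feature column, the mean increase in MSE after shuffling it.
    """
    rng = random.Random(seed)
    baseline = mse(y, predict(X))
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            # Shuffle column j only: its values keep the same distribution,
            # but their link to y (and to the other columns) is broken.
            column = [row[j] for row in X]
            rng.shuffle(column)
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            increases.append(mse(y, predict(X_perm)) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances


# Hypothetical data: x0 in [0, 1), x1 in [0, 1000) (never normalized), x2 is noise.
data_rng = random.Random(42)
X = [[data_rng.random(), data_rng.uniform(0, 1000), data_rng.random()] for _ in range(2000)]

# Hypothetical coefficients; x1's is tiny only because its values are large.
coef = [3.0, 0.006, 0.0]
y = [coef[0] * r[0] + coef[1] * r[1] + data_rng.gauss(0, 0.1) for r in X]


def linear_predict(rows):
    """Stand-in for a fitted linear model that uses the coefficients above."""
    return [coef[0] * r[0] + coef[1] * r[1] + coef[2] * r[2] for r in rows]


importances = permutation_importance(linear_predict, X, y)
for name, c, imp in zip(["x0", "x1", "x2"], coef, importances):
    print(f"{name}: coefficient={c}, permutation importance={imp:.3f}")
```

The coefficient of `x1` is 500 times smaller than that of `x0`, yet shuffling `x1` should hurt the model more. For a linear model with independent features, the expected increase in MSE from shuffling feature j is about 2·β_j²·Var(x_j). That works out to roughly 1.5 for `x0` and 6 for `x1`. Shuffling `x2`, which the model ignores, changes nothing. The function only calls `predict`, so the same code works unchanged for a random forest or any other model. In practice, compute the importances on held-out validation data rather than on the training set. For scikit-learn estimators, `sklearn.inspection.permutation_importance` implements the same idea.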
